Prompt to Production: What It Really Means for a Website to Be Auto-Tested Before Launch

Rajesh P

March 17, 2026 · 8 min read

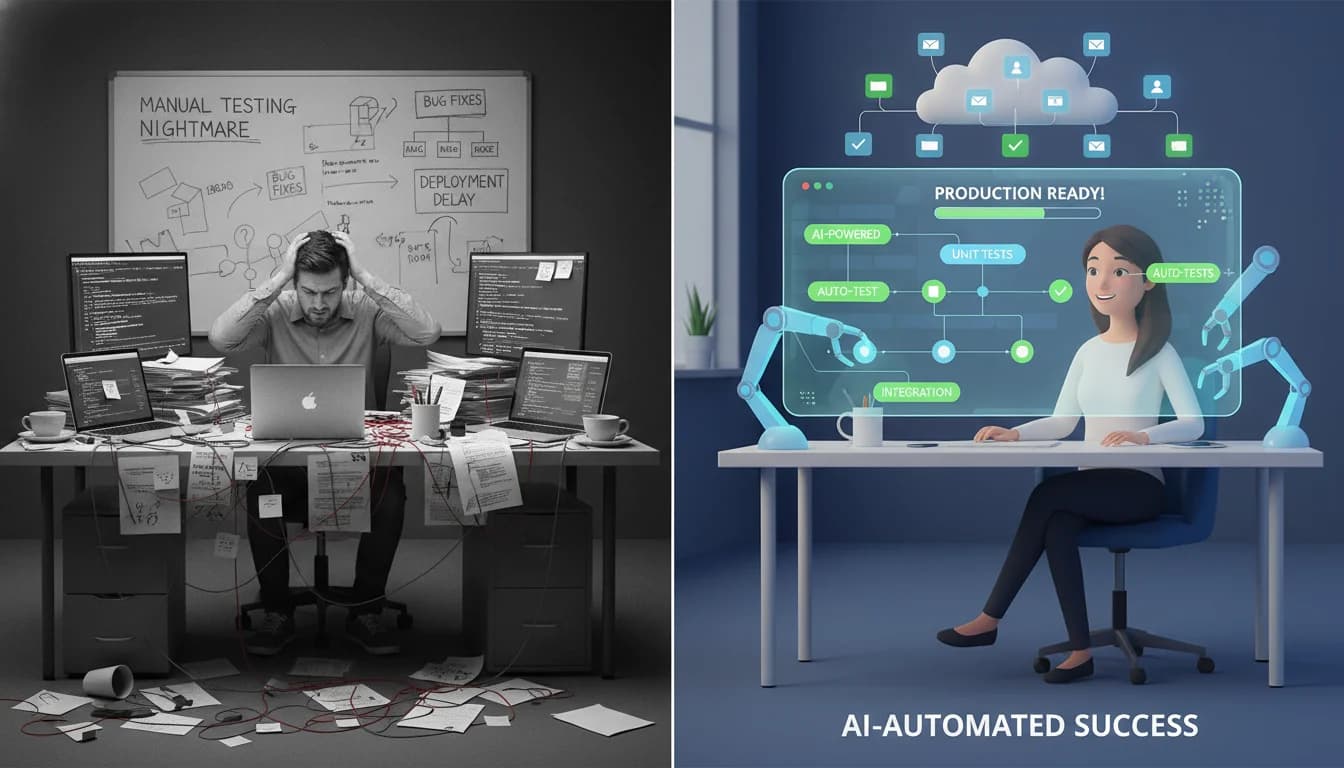

You hit publish on your AI-built site, share the link in a group chat, and within the hour someone tells you the signup form doesn't work. It did in the editor preview. It doesn't in production. You're now wondering whether any AI website builder with automated testing actually exists, or whether you're just expected to click through every page yourself before every launch.

The short answer is that most builders don't test anything before publishing. They generate code and push it live. Whether it works is something you discover after the fact. A few builders have started changing this, but the phrase 'auto-tested' gets used loosely enough that it's worth being specific about what it actually means and what it doesn't.

This post breaks down the mechanics: what happens between prompt and production in a typical AI builder, where the gaps are, and what a real automated testing layer looks like before a site goes live.

What 'Published' Actually Means (and What It Doesn't)

When you click publish in most AI website builders, the builder takes the generated code and deploys it. That's the full process. There's no checkpoint between 'the AI wrote this' and 'it's live for your users.' The code goes from the model's output directly to your production URL.

This isn't unique to AI builders. Traditional no-code tools work the same way. But AI-generated code has a specific failure pattern: it can look entirely correct and still break under real user conditions. The checkout form works in the editor preview, which runs with a test API key and no real network latency. In production, the order is confirmed by a webhook handler that receives events from Stripe, and that handler expects a payload shape the AI got slightly wrong. You don't find out until a customer's payment fails silently and they email you three days later.
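
Here is a minimal TypeScript sketch of that failure. It assumes a simplified event envelope loosely modeled on Stripe's (`type` plus `data.object`). The `metadata.orderId` field, the in-memory `orders` map, and both handler names are hypothetical. The buggy handler reads the order ID from the wrong place and quietly does nothing. The stricter handler checks the shape it depends on and throws, which gives a test something to catch.

```typescript
// Simplified event envelope, loosely modeled on Stripe's (type + data.object).
// metadata.orderId and the in-memory orders map are hypothetical.
type WebhookEvent = {
  type: string;
  data: { object: Record<string, unknown> };
};

type OrderStatus = "pending" | "paid";
const orders = new Map<string, OrderStatus>([["ord_1", "pending"]]);

// Buggy handler: looks for orderId directly on data.object, but the sender
// nests it under metadata. It never throws; it just silently does nothing.
function handleWebhookBuggy(evt: WebhookEvent): void {
  const orderId = evt.data.object["orderId"];
  if (evt.type === "checkout.session.completed" && typeof orderId === "string") {
    orders.set(orderId, "paid");
  }
}

// Strict handler: validates the shape it relies on and throws if it's wrong,
// so a test (or an error response) surfaces the mismatch instead of hiding it.
function handleWebhook(evt: WebhookEvent): void {
  if (evt.type !== "checkout.session.completed") return;
  const metadata = evt.data.object["metadata"];
  const orderId =
    typeof metadata === "object" && metadata !== null
      ? (metadata as Record<string, unknown>)["orderId"]
      : undefined;
  if (typeof orderId !== "string" || !orders.has(orderId)) {
    throw new Error("Unexpected payload shape or unknown order");
  }
  orders.set(orderId, "paid");
}

// A realistic payload, shaped the way the production sender delivers it.
const samplePayload: WebhookEvent = {
  type: "checkout.session.completed",
  data: { object: { id: "cs_test_123", metadata: { orderId: "ord_1" } } },
};

handleWebhookBuggy(samplePayload);
console.log(orders.get("ord_1")); // logs: pending (the buggy handler did nothing)
handleWebhook(samplePayload);
console.log(orders.get("ord_1")); // logs: paid
```

A preview that only renders the checkout page never exercises either handler. That is why this kind of bug survives until a real event arrives.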

The editor preview is not a test. It's a visual check. It confirms the page renders. It says nothing about whether the underlying logic works with real data, real auth states, and real external services.

"I launched on a Monday. By Wednesday I'd had four people tell me the signup form didn't work. The builder showed me a green checkmark. It had no idea the form was broken." — Founder, Indie Hackers, January 2026

Why AI Website Builders Skip Testing by Default

Testing costs time and compute. For a tool built around speed — describe it and it's live in minutes — adding a test layer introduces friction that most builders have decided isn't worth it. The value proposition is fast generation. A test suite that catches regressions is a different product with a different cost model.

There's also a structural problem. Writing tests requires knowing what correct behavior looks like. An AI builder generating code from a prompt knows what the user asked for, but it doesn't always encode that intent as a formal, checkable assertion. It has no built-in way to automatically say 'the checkout must complete and write an order record to the database' unless it was explicitly designed to do that. Most weren't.
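
Turning that sentence into a check is not complicated once someone decides to do it. Below is a minimal TypeScript sketch. `InMemoryDb`, `checkout`, and the cart shape are hypothetical stand-ins for a real database and backend. The requirement stops being a line in a prompt and becomes code that either passes or fails.

```typescript
// Hypothetical stand-ins for a real database and checkout backend.
type CartItem = { sku: string; qty: number; unitPriceCents: number };
type OrderRecord = { id: string; items: CartItem[]; totalCents: number };

class InMemoryDb {
  orders: OrderRecord[] = [];
  insertOrder(order: OrderRecord): void {
    this.orders.push(order);
  }
}

function checkout(db: InMemoryDb, cart: CartItem[]): { ok: boolean; orderId?: string } {
  if (cart.length === 0) return { ok: false };
  const totalCents = cart.reduce((sum, item) => sum + item.qty * item.unitPriceCents, 0);
  const orderId = `ord_${db.orders.length + 1}`;
  db.insertOrder({ id: orderId, items: cart, totalCents });
  return { ok: true, orderId };
}

// "The checkout must complete and write an order record to the database,"
// expressed as an assertion rather than as intent in a prompt.
function assertCheckoutWritesOrder(): void {
  const db = new InMemoryDb();
  const result = checkout(db, [{ sku: "mug", qty: 2, unitPriceCents: 1500 }]);
  if (!result.ok) throw new Error("checkout did not complete");
  const saved = db.orders.find((o) => o.id === result.orderId);
  if (!saved) throw new Error("no order record written");
  if (saved.totalCents !== 3000) throw new Error(`wrong total: ${saved.totalCents}`);
}

assertCheckoutWritesOrder();
console.log("checkout requirement holds");
```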

- Most builders have no test runner in their deployment pipeline at all.

- Preview environments don't replicate real network conditions, auth states, or database constraints.

- AI-generated code can be syntactically valid and functionally broken at the same time.

- Without a test suite, there's no way to know if a new change broke an existing feature.

- The AI reports a change as successful whether or not it actually works end to end.

The result is that most AI-generated sites ship with zero automated verification. The builder did its job: it generated code. Whether that code works in production is left entirely to you.

What Auto-Testing Actually Looks Like Before a Site Goes Live

A site that's genuinely auto-tested before launch has had a suite of assertions run against it that verify real user behavior, not just that the code compiled. If you want to know whether an AI-generated website actually works, this is what to look for.

1. Unit tests verify that individual functions return correct output. Typical catches: a price calculation that adds tax incorrectly, or a form validator that accepts an invalid email address. These run in milliseconds and flag logic errors before anyone loads the page.

2. Integration tests verify that your site's components work together: the auth system correctly writes a user record to the database, and the webhook handler receives a Stripe event and updates the order status. These cover the connection points where AI-generated code most often breaks.

3. End-to-end tests simulate a real user completing a full journey. A visitor lands on the page, adds a product to their cart, enters payment details, and checks out. The order appears in the database and a confirmation email goes out. If any step in that chain fails, the test fails and the broken version never ships.
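
Here is a minimal TypeScript sketch of the first layer, with unit tests for the two examples above. It assumes prices are stored in integer cents and a flat tax rate. `addTax`, `isValidEmail`, and the tiny test runner are hypothetical. The email regex is a deliberately simple illustration, not a complete validator.

```typescript
// Hypothetical helpers of the kind an AI builder might generate.
// Prices are integer cents to avoid floating-point drift in stored values.
function addTax(subtotalCents: number, taxRate: number): number {
  return Math.round(subtotalCents * (1 + taxRate));
}

// Deliberately simple: no whitespace, exactly one "@", and a dot in the domain.
function isValidEmail(input: string): boolean {
  return /^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(input);
}

// Minimal runner: each case is a name plus a function that throws on failure.
type TestCase = { name: string; run: () => void };

function expectEqual<T>(actual: T, expected: T): void {
  if (actual !== expected) throw new Error(`expected ${String(expected)}, got ${String(actual)}`);
}

const unitTests: TestCase[] = [
  { name: "adds 8% tax to $10.00", run: () => expectEqual(addTax(1000, 0.08), 1080) },
  { name: "zero tax leaves price unchanged", run: () => expectEqual(addTax(2599, 0), 2599) },
  { name: "accepts a normal address", run: () => expectEqual(isValidEmail("ana@example.com"), true) },
  { name: "rejects a domain with no dot", run: () => expectEqual(isValidEmail("ana@example"), false) },
  { name: "rejects an embedded space", run: () => expectEqual(isValidEmail("ana @example.com"), false) },
];

// Returns one message per failing case; an empty array means everything passed.
function runTests(tests: TestCase[]): string[] {
  const failures: string[] = [];
  for (const t of tests) {
    try {
      t.run();
    } catch (err) {
      failures.push(`${t.name}: ${(err as Error).message}`);
    }
  }
  return failures;
}

console.log(runTests(unitTests)); // []
```

In practice you'd use a framework such as Vitest, Jest, or Node's built-in `node:test` instead of a hand-rolled runner. The structure is the same: named cases, each of which fails loudly.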

The critical phrase is 'before it ships.' Tests that run after publish are monitoring, not quality gates. Auto-testing before launch means the broken version never reaches your users at all. You get a report of what failed, not a support email from a frustrated customer.
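
The gate itself takes only a few lines to express. Below is a minimal sketch of the pattern. It assumes a hypothetical `Build` that can run its own suite and a `DeployState` that records which build is live. It is not any particular builder's pipeline. The key property is ordering: tests run against the candidate before the live pointer moves, so a failing build leaves the previous version serving traffic.

```typescript
// Hypothetical deploy model: a build can run its suite; state tracks the live build.
type Build = { id: string; runSuite: () => string[] }; // returns failure messages
type DeployState = { liveBuildId: string };
type PublishResult =
  | { published: true; liveBuildId: string }
  | { published: false; liveBuildId: string; failures: string[] };

// Quality gate: run the suite on the candidate *before* switching what's live.
// On any failure, the current build stays live and the caller gets a report.
function publish(state: DeployState, candidate: Build): PublishResult {
  const failures = candidate.runSuite();
  if (failures.length > 0) {
    return { published: false, liveBuildId: state.liveBuildId, failures };
  }
  state.liveBuildId = candidate.id;
  return { published: true, liveBuildId: candidate.id };
}

const deployState: DeployState = { liveBuildId: "build-41" };

const brokenBuild: Build = {
  id: "build-42",
  runSuite: () => ["signup form: POST /api/signup returned 500"],
};
const fixedBuild: Build = { id: "build-43", runSuite: () => [] };

console.log(publish(deployState, brokenBuild)); // not published; build-41 stays live
console.log(publish(deployState, fixedBuild)); // published; build-43 is now live
```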

How CodePup's Auto-Testing Works Before Every Publish

CodePup generates tests alongside every feature it builds. When it creates a checkout flow, it also creates tests that verify the checkout works end to end. When it adds a contact form, it generates assertions that confirm the form submits correctly and the data lands in the right place. You don't write these tests. They're produced as part of the build, automatically.

Before every publish, CodePup runs the full test suite against your site. If a change breaks an existing behavior, the test catches it before the broken version goes live. You see a clear failure report. The working version stays live while the issue gets resolved. Your users never see the broken state.

Changes are also scoped. When you ask for a modification, CodePup edits only the components that need to change rather than regenerating whole pages. A smaller change surface means fewer chances of breaking something unrelated, and the test suite catches anything that slips through anyway. The two mechanisms work together.

When a CodePup user added a HeyGen video integration to their landing page, the test suite automatically verified that the existing signup form, contact page, and Stripe checkout were unaffected. The integration shipped clean. No manual re-testing required.

What Gets Caught Before You Ever See It

These are the most common failures in AI-built sites. With no-code website testing automation in place, none of them reach production:

- Checkout flow breaks after a layout change because the submit handler lost its reference to the cart state.

- Login redirects to a blank page after an auth configuration update changed the session token format.

- Contact form submits successfully in the UI but silently fails to write to the database because a required field changed type.

- Webhook handler stops processing payments after a code update changed the expected payload structure.

- A new page causes a 404 on an existing linked page because the route pattern was modified during generation.

- Signup confirmation email stops sending after an SMTP configuration update the AI didn't propagate correctly.

Every one of these is a real failure mode in AI-built sites. Each is caught by integration and end-to-end tests in under two minutes. Without those tests, you find out from users.
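
Some of these checks don't even need a browser. For the broken-link case, one approach is to collect the internal links each generated page emits and assert that every one matches a defined route. Below is a minimal sketch. The route table, the `:param` pattern syntax, and the page data are hypothetical. A real site would read them from its router and its rendered HTML.

```typescript
// Hypothetical route table, using ":param" segments for dynamic parts.
const routes: string[] = ["/", "/pricing", "/blog/:slug", "/contact"];

// Internal links found on each generated page (hypothetical data).
const pageLinks: Record<string, string[]> = {
  "/": ["/pricing", "/blog/launch-notes", "/contact"],
  "/pricing": ["/", "/signup"], // "/signup" has no route, so it would 404
};

// A path matches a pattern when segment counts agree and every static segment is equal.
function matchesRoute(path: string, pattern: string): boolean {
  const pathParts = path.split("/").filter(Boolean);
  const patternParts = pattern.split("/").filter(Boolean);
  if (pathParts.length !== patternParts.length) return false;
  return patternParts.every((seg, i) => seg.startsWith(":") || seg === pathParts[i]);
}

// Lists every "page -> link" pair whose target matches no route.
function findBrokenLinks(links: Record<string, string[]>, table: string[]): string[] {
  const broken: string[] = [];
  for (const [page, hrefs] of Object.entries(links)) {
    for (const href of hrefs) {
      if (!table.some((pattern) => matchesRoute(href, pattern))) {
        broken.push(`${page} -> ${href}`);
      }
    }
  }
  return broken;
}

console.log(findBrokenLinks(pageLinks, routes)); // [ '/pricing -> /signup' ]
```

Query strings and hash fragments would need to be stripped from each link before matching. That step is left out to keep the sketch short.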

The difference between a site that holds up and one that needs constant manual patching isn't the quality of the AI. It's whether anyone built a verification layer around the AI's output. In 2026, that's what no-code website testing automation actually means: not a human QA pass, but an automated suite that runs every time something changes and stops broken code from reaching your users.

Most AI builders ship code straight to production with no verification step. CodePup runs a full test suite before every publish so breakage gets caught before your users see it. Build your site on CodePup and launch knowing it actually works.
